It seems that Americans are in the midst of a raging epidemic of mental illness, at least as judged by the increase in the numbers treated for it. The tally of those who are so disabled by mental disorders that they qualify for Supplemental Security Income (SSI) or Social Security Disability Insurance (SSDI) increased nearly two and a half times between 1987 and 2007—from one in 184 Americans to one in seventy-six. For children, the rise is even more startling—a thirty-five-fold increase in the same two decades. Mental illness is now the leading cause of disability in children, well ahead of physical disabilities like cerebral palsy or Down syndrome, for which the federal programs were created.

A large survey of randomly selected adults, sponsored by the National Institute of Mental Health (NIMH) and conducted between 2001 and 2003, found that an astonishing 46 percent met criteria established by the American Psychiatric Association (APA) for having had at least one mental illness within four broad categories at some time in their lives. The categories were “anxiety disorders,” including, among other subcategories, phobias and post-traumatic stress disorder (PTSD); “mood disorders,” including major depression and bipolar disorders; “impulse-control disorders,” including various behavioral problems and attention-deficit/hyperactivity disorder (ADHD); and “substance use disorders,” including alcohol and drug abuse. Most met criteria for more than one diagnosis. Of a subgroup affected within the previous year, a third were under treatment—up from a fifth in a similar survey ten years earlier.

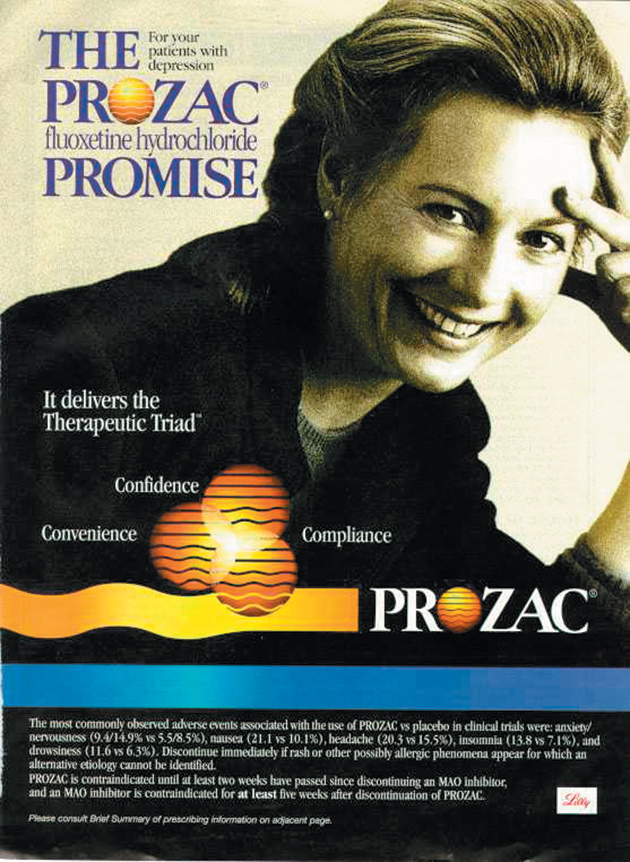

Nowadays treatment by medical doctors nearly always means psychoactive drugs, that is, drugs that affect the mental state. In fact, most psychiatrists treat only with drugs, and refer patients to psychologists or social workers if they believe psychotherapy is also warranted. The shift from “talk therapy” to drugs as the dominant mode of treatment coincides with the emergence over the past four decades of the theory that mental illness is caused primarily by chemical imbalances in the brain that can be corrected by specific drugs. That theory became broadly accepted, by the media and the public as well as by the medical profession, after Prozac came to market in 1987 and was intensively promoted as a corrective for a deficiency of serotonin in the brain. The number of people treated for depression tripled in the following ten years, and about 10 percent of Americans over age six now take antidepressants. The increased use of drugs to treat psychosis is even more dramatic. The new generation of antipsychotics, such as Risperdal, Zyprexa, and Seroquel, has replaced cholesterol-lowering agents as the top-selling class of drugs in the US.

What is going on here? Is the prevalence of mental illness really that high and still climbing? Particularly if these disorders are biologically determined and not a result of environmental influences, is it plausible to suppose that such an increase is real? Or are we learning to recognize and diagnose mental disorders that were always there? On the other hand, are we simply expanding the criteria for mental illness so that nearly everyone has one? And what about the drugs that are now the mainstay of treatment? Do they work? If they do, shouldn’t we expect the prevalence of mental illness to be declining, not rising?

These are the questions, among others, that concern the authors of the three provocative books under review here. They come at the questions from different backgrounds—Irving Kirsch is a psychologist at the University of Hull in the UK, Robert Whitaker a journalist and previously the author of a history of the treatment of mental illness called Mad in America (2001), and Daniel Carlat a psychiatrist who practices in a Boston suburb and publishes a newsletter and blog about his profession.

The authors emphasize different aspects of the epidemic of mental illness. Kirsch is concerned with whether antidepressants work. Whitaker, who has written an angrier book, takes on the entire spectrum of mental illness and asks whether psychoactive drugs create worse problems than they solve. Carlat, who writes more in sorrow than in anger, looks mainly at how his profession has allied itself with, and is manipulated by, the pharmaceutical industry. But despite their differences, all three are in remarkable agreement on some important matters, and they have documented their views well.

First, they agree on the disturbing extent to which the companies that sell psychoactive drugs—through various forms of marketing, both legal and illegal, and what many people would describe as bribery—have come to determine what constitutes a mental illness and how the disorders should be diagnosed and treated. This is a subject to which I’ll return.

Second, none of the three authors subscribes to the popular theory that mental illness is caused by a chemical imbalance in the brain. As Whitaker tells the story, that theory had its genesis shortly after psychoactive drugs were introduced in the 1950s. The first was Thorazine (chlorpromazine), which was launched in 1954 as a “major tranquilizer” and quickly found widespread use in mental hospitals to calm psychotic patients, mainly those with schizophrenia. Thorazine was followed the next year by Miltown (meprobamate), sold as a “minor tranquilizer” to treat anxiety in outpatients. And in 1957, Marsilid (iproniazid) came on the market as a “psychic energizer” to treat depression.

In the space of three short years, then, drugs had become available to treat what at that time were regarded as the three major categories of mental illness—psychosis, anxiety, and depression—and the face of psychiatry was totally transformed. These drugs, however, had not initially been developed to treat mental illness. They had been derived from drugs meant to treat infections, and were found only serendipitously to alter the mental state. At first, no one had any idea how they worked. They simply blunted disturbing mental symptoms. But over the next decade, researchers found that these drugs, and the newer psychoactive drugs that quickly followed, affected the levels of certain chemicals in the brain.

Some brief, and necessarily quite simplified, background: the brain contains billions of nerve cells, called neurons, arrayed in immensely complicated networks and communicating with one another constantly. The typical neuron has multiple filamentous extensions, one called an axon and the others called dendrites, through which it sends signals to and receives signals from other neurons. For one neuron to communicate with another, however, the signal must be transmitted across the tiny space separating them, called a synapse. To accomplish that, the axon of the sending neuron releases a chemical, called a neurotransmitter, into the synapse. The neurotransmitter crosses the synapse and attaches to receptors on the second neuron, often on a dendrite, thereby activating or inhibiting the receiving cell. Afterward, the neurotransmitter is either reabsorbed by the first neuron or metabolized by enzymes so that the status quo ante is restored. Axons have multiple terminals, so each neuron has multiple synapses. There are exceptions and variations to this story, but that is the usual way neurons communicate with one another.

When it was found that psychoactive drugs affect neurotransmitter levels in the brain, as evidenced mainly by the levels of their breakdown products in the spinal fluid, the theory arose that the cause of mental illness is an abnormality in the brain’s concentration of these chemicals that is specifically countered by the appropriate drug. For example, because Thorazine was found to lower dopamine levels in the brain, it was postulated that psychoses like schizophrenia are caused by too much dopamine. Or later, because certain antidepressants increase levels of the neurotransmitter serotonin in the brain, it was postulated that depression is caused by too little serotonin. (These antidepressants, like Prozac or Celexa, are called selective serotonin reuptake inhibitors (SSRIs) because they prevent the reabsorption of serotonin by the neurons that release it, so that more remains in the synapses to activate other neurons.) Thus, instead of developing a drug to treat an abnormality, an abnormality was postulated to fit a drug.

That was a great leap in logic, as all three authors point out. It was entirely possible that drugs that affected neurotransmitter levels could relieve symptoms even if neurotransmitters had nothing to do with the illness in the first place (and even possible that they relieved symptoms through some other mode of action entirely). As Carlat puts it, “By this same logic one could argue that the cause of all pain conditions is a deficiency of opiates, since narcotic pain medications activate opiate receptors in the brain.” Or similarly, one could argue that fevers are caused by too little aspirin.

But the main problem with the theory is that after decades of trying to prove it, researchers have still come up empty-handed. All three authors document the failure of scientists to find good evidence in its favor. Neurotransmitter function seems to be normal in people with mental illness before treatment. In Whitaker’s words:

Prior to treatment, patients diagnosed with schizophrenia, depression, and other psychiatric disorders do not suffer from any known “chemical imbalance.” However, once a person is put on a psychiatric medication, which, in one manner or another, throws a wrench into the usual mechanics of a neuronal pathway, his or her brain begins to function…abnormally.

Carlat refers to the chemical imbalance theory as a “myth” (which he calls “convenient” because it destigmatizes mental illness), and Kirsch, whose book focuses on depression, sums up this way: “It now seems beyond question that the traditional account of depression as a chemical imbalance in the brain is simply wrong.” Why the theory persists despite the lack of evidence is a subject I’ll come to.

Do the drugs work? After all, regardless of the theory, that is the practical question. In his spare, remarkably engrossing book, The Emperor’s New Drugs, Kirsch describes his fifteen-year scientific quest to answer that question about antidepressants. When he began his work in 1995, his main interest was in the effects of placebos. To study them, he and a colleague reviewed thirty-eight published clinical trials that compared various treatments for depression with placebos, or compared psychotherapy with no treatment. Most such trials last for six to eight weeks, and during that time, patients tend to improve somewhat even without any treatment. But Kirsch found that placebos were three times as effective as no treatment. That didn’t particularly surprise him. What did surprise him was the fact that antidepressants were only marginally better than placebos. As judged by scales used to measure depression, placebos were 75 percent as effective as antidepressants. Kirsch then decided to repeat his study by examining a more complete and standardized data set.

The data he used were obtained from the US Food and Drug Administration (FDA) instead of the published literature. When drug companies seek approval from the FDA to market a new drug, they must submit to the agency all clinical trials they have sponsored. The trials are usually double-blind and placebo-controlled, that is, the participating patients are randomly assigned to either drug or placebo, and neither they nor their doctors know which they have been assigned. The patients are told only that they will receive an active drug or a placebo, and they are also told of any side effects they might experience. If two trials show that the drug is more effective than a placebo, the drug is generally approved. But companies may sponsor as many trials as they like, most of which could be negative—that is, fail to show effectiveness. All they need is two positive ones. (The results of trials of the same drug can differ for many reasons, including the way the trial is designed and conducted, its size, and the types of patients studied.)
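To see why the number of trials matters under a "two positive trials" rule, a rough calculation helps. The sketch below is illustrative only: it assumes the trials are independent and that each has the same chance of coming out positive, and the per-trial probability of 0.3 is a made-up figure, not one taken from Kirsch or the FDA. The function name is likewise hypothetical.

```python
def prob_at_least_two_positive(n_trials: int, p_positive: float) -> float:
    """Probability that at least two of n independent trials are positive.

    Assumes every trial has the same probability p_positive of showing
    the drug beating placebo (a simplifying assumption for illustration).
    """
    if not 0.0 <= p_positive <= 1.0:
        raise ValueError("p_positive must be between 0 and 1")
    if n_trials < 2:
        return 0.0  # two positive trials are impossible with fewer than two trials
    p_none = (1 - p_positive) ** n_trials
    p_exactly_one = n_trials * p_positive * (1 - p_positive) ** (n_trials - 1)
    return 1.0 - p_none - p_exactly_one


if __name__ == "__main__":
    # Hypothetical per-trial chance of a positive result.
    p = 0.3
    for n in (2, 4, 6, 8, 10):
        print(f"{n:2d} trials: P(at least two positive) = "
              f"{prob_at_least_two_positive(n, p):.2f}")
```

Under these assumptions the chance of collecting two positive trials rises steadily with the number of trials run, which is the point of the paragraph above: the rule counts successes without weighing them against failures.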

For obvious reasons, drug companies make very sure that their positive studies are published in medical journals and doctors know about them, while the negative ones often languish unseen within the FDA, which regards them as proprietary and therefore confidential. This practice greatly biases the medical literature, medical education, and treatment decisions.

Kirsch and his colleagues used the Freedom of Information Act to obtain FDA reviews of all placebo-controlled clinical trials, whether positive or negative, submitted for the initial approval of the six most widely used antidepressant drugs approved between 1987 and 1999—Prozac, Paxil, Zoloft, Celexa, Serzone, and Effexor. This was a better data set than the one used in his previous study, not only because it included negative studies but because the FDA sets uniform quality standards for the trials it reviews and not all of the published research in Kirsch’s earlier study had been submitted to the FDA as part of a drug approval application.

Altogether, there were forty-two trials of the six drugs. Most of them were negative. Overall, placebos were 82 percent as effective as the drugs, as measured by the Hamilton Depression Scale (HAM-D), a widely used score of symptoms of depression. The average difference between drug and placebo was only 1.8 points on the HAM-D, a difference that, while statistically significant, was clinically meaningless. The results were much the same for all six drugs: they were all equally unimpressive. Yet because the positive studies were extensively publicized, while the negative ones were hidden, the public and the medical profession came to believe that these drugs were highly effective antidepressants.
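The two summary figures in that paragraph can be checked against each other with simple arithmetic. The sketch below assumes that "82 percent as effective" means the average improvement on placebo was 0.82 times the average improvement on the drug, and that the 1.8-point difference refers to the same two averages. Both readings are assumptions made for illustration, and the function name is hypothetical.

```python
def implied_improvements(ratio: float, difference: float) -> tuple[float, float]:
    """Return (drug_improvement, placebo_improvement) in scale points.

    Solves the pair of equations
        placebo = ratio * drug
        drug - placebo = difference
    """
    if not 0.0 <= ratio < 1.0:
        raise ValueError("ratio must be at least 0 and less than 1")
    drug = difference / (1.0 - ratio)
    placebo = ratio * drug
    return drug, placebo


if __name__ == "__main__":
    drug, placebo = implied_improvements(ratio=0.82, difference=1.8)
    print(f"drug: about {drug:.1f} points, placebo: about {placebo:.1f} points")
```

On this reading, patients on the drugs improved by roughly 10 points on the HAM-D and patients on placebo by roughly 8.2 points, so most of the improvement seen in the drug groups was also seen in the placebo groups.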

Kirsch was also struck by another unexpected finding. In his earlier study and in work by others, he observed that even treatments that were not considered to be antidepressants—such as synthetic thyroid hormone, opiates, sedatives, stimulants, and some herbal remedies—were as effective as antidepressants in alleviating the symptoms of depression. Kirsch writes, “When administered as antidepressants, drugs that increase, decrease or have no effect on serotonin all relieve depression to about the same degree.” What all these “effective” drugs had in common was that they produced side effects, which participating patients had been told they might experience.

It is important that clinical trials, particularly those dealing with subjective conditions like depression, remain double-blind, with neither patients nor doctors knowing whether or not they are getting a placebo. That prevents both patients and doctors from imagining improvements that are not there, something that is more likely if they believe the agent being administered is an active drug instead of a placebo. Faced with his findings that nearly any pill with side effects was slightly more effective in treating depression than an inert placebo, Kirsch speculated that the presence of side effects in individuals receiving drugs enabled them to guess correctly that they were getting active treatment—and this was borne out by interviews with patients and doctors—which made them more likely to report improvement. He suggests that the reason antidepressants appear to work better in relieving severe depression than in less severe cases is that patients with severe symptoms are likely to be on higher doses and therefore experience more side effects.

To further investigate whether side effects bias responses, Kirsch looked at some trials that employed “active” placebos instead of inert ones. An active placebo is one that itself produces side effects, such as atropine—a drug that selectively blocks the action of certain types of nerve fibers. Although not an antidepressant, atropine causes, among other things, a noticeably dry mouth. In trials using atropine as the placebo, there was no difference between the antidepressant and the active placebo. Everyone had side effects of one type or another, and everyone reported the same level of improvement. Kirsch reported a number of other odd findings in clinical trials of antidepressants, including the fact that there is no dose-response curve—that is, high doses worked no better than low ones—which is extremely unlikely for truly effective drugs. “Putting all this together,” writes Kirsch,

leads to the conclusion that the relatively small difference between drugs and placebos might not be a real drug effect at all. Instead, it might be an enhanced placebo effect, produced by the fact that some patients have broken [the] blind and have come to realize whether they were given drug or placebo. If this is the case, then there is no real antidepressant drug effect at all. Rather than comparing placebo to drug, we have been comparing “regular” placebos to “extra-strength” placebos.

That is a startling conclusion that flies in the face of widely accepted medical opinion, but Kirsch reaches it in a careful, logical way. Psychiatrists who use antidepressants—and that’s most of them—and patients who take them might insist that they know from clinical experience that the drugs work. But anecdotes are known to be a treacherous way to evaluate medical treatments, since they are so subject to bias; they can suggest hypotheses to be studied, but they cannot prove them. That is why the development of the double-blind, randomized, placebo-controlled clinical trial in the middle of the past century was such an important advance in medical science. Anecdotes about leeches or laetrile or megadoses of vitamin C, or any number of other popular treatments, could not stand up to the scrutiny of well-designed trials. Kirsch is a faithful proponent of the scientific method, and his voice therefore brings a welcome objectivity to a subject often swayed by anecdotes, emotions, or, as we will see, self-interest.

Whitaker’s book is broader and more polemical. He considers all mental illness, not just depression. Whereas Kirsch concludes that antidepressants are probably no more effective than placebos, Whitaker concludes that they and most of the other psychoactive drugs are not only ineffective but harmful. He begins by observing that even as drug treatment for mental illness has skyrocketed, so has the prevalence of the conditions treated:

The number of disabled mentally ill has risen dramatically since 1955, and during the past two decades, a period when the prescribing of psychiatric medications has exploded, the number of adults and children disabled by mental illness has risen at a mind-boggling rate. Thus we arrive at an obvious question, even though it is heretical in kind: Could our drug-based paradigm of care, in some unforeseen way, be fueling this modern-day plague?

Moreover, Whitaker contends, the natural history of mental illness has changed. Whereas conditions such as schizophrenia and depression were once mainly self-limited or episodic, with each episode usually lasting no more than six months and interspersed with long periods of normalcy, the conditions are now chronic and lifelong. Whitaker believes that this might be because drugs, even those that relieve symptoms in the short term, cause long-term mental harms that continue after the underlying illness would have naturally resolved.

The evidence he marshals for this theory varies in quality. He doesn’t sufficiently acknowledge the difficulty of studying the natural history of any illness over a fifty-some-year time span during which many circumstances have changed, in addition to drug use. It is even more difficult to compare long-term outcomes in treated versus untreated patients, since treatment may be more likely in those with more severe disease at the outset. Nevertheless, Whitaker’s evidence is suggestive, if not conclusive.

If psychoactive drugs do cause harm, as Whitaker contends, what is the mechanism? The answer, he believes, lies in their effects on neurotransmitters. It is well understood that psychoactive drugs disturb neurotransmitter function, even if that was not the cause of the illness in the first place. Whitaker describes a chain of effects. When, for example, an SSRI antidepressant like Celexa increases serotonin levels in synapses, it stimulates compensatory changes through a process called negative feedback. In response to the high levels of serotonin, the neurons that secrete it (presynaptic neurons) release less of it, and the postsynaptic neurons become desensitized to it. In effect, the brain is trying to nullify the drug’s effects. The same is true for drugs that block neurotransmitters, except in reverse. For example, most antipsychotic drugs block dopamine, but the presynaptic neurons compensate by releasing more of it, and the postsynaptic neurons take it up more avidly. (This explanation is necessarily oversimplified, since many psychoactive drugs affect more than one of the many neurotransmitters.)

With long-term use of psychoactive drugs, the result is, in the words of Steve Hyman, a former director of the NIMH and until recently provost of Harvard University, “substantial and long-lasting alterations in neural function.” As quoted by Whitaker, Hyman wrote that the brain begins to function in a manner “qualitatively as well as quantitatively different from the normal state.” After several weeks on psychoactive drugs, the brain’s compensatory efforts begin to fail, and side effects emerge that reflect the mechanism of action of the drugs. For example, the SSRIs may cause episodes of mania, because of the excess of serotonin. Antipsychotics cause side effects that resemble Parkinson’s disease, because of the depletion of dopamine (which is also depleted in Parkinson’s disease). As side effects emerge, they are often treated by other drugs, and many patients end up on a cocktail of psychoactive drugs prescribed for a cocktail of diagnoses. The episodes of mania caused by antidepressants may lead to a new diagnosis of “bipolar disorder” and treatment with a “mood stabilizer,” such as Depakote (an anticonvulsant) plus one of the newer antipsychotic drugs. And so on.

Some patients take as many as six psychoactive drugs daily. One well-respected researcher, Nancy Andreasen, and her colleagues published evidence that the use of antipsychotic drugs is associated with shrinkage of the brain, and that the effect is directly related to the dose and duration of treatment. As Andreasen explained to The New York Times, “The prefrontal cortex doesn’t get the input it needs and is being shut down by drugs. That reduces the psychotic symptoms. It also causes the prefrontal cortex to slowly atrophy.”*

Getting off the drugs is exceedingly difficult, according to Whitaker, because when they are withdrawn the compensatory mechanisms are left unopposed. When Celexa is withdrawn, serotonin levels fall precipitously because the presynaptic neurons are not releasing normal amounts and the postsynaptic neurons no longer have enough receptors for it. Similarly, when an antipsychotic is withdrawn, dopamine levels may skyrocket. The symptoms produced by withdrawing psychoactive drugs are often confused with relapses of the original disorder, which can lead psychiatrists to resume drug treatment, perhaps at higher doses.

Unlike the cool Kirsch, Whitaker is outraged by what he sees as an iatrogenic (i.e., inadvertent and medically introduced) epidemic of brain dysfunction, particularly that caused by the widespread use of the newer (“atypical”) antipsychotics, such as Zyprexa, which cause serious side effects. Here is what he calls his “quick thought experiment”:

Imagine that a virus suddenly appears in our society that makes people sleep twelve, fourteen hours a day. Those infected with it move about somewhat slowly and seem emotionally disengaged. Many gain huge amounts of weight—twenty, forty, sixty, and even one hundred pounds. Often their blood sugar levels soar, and so do their cholesterol levels. A number of those struck by the mysterious illness—including young children and teenagers—become diabetic in fairly short order…. The federal government gives hundreds of millions of dollars to scientists at the best universities to decipher the inner workings of this virus, and they report that the reason it causes such global dysfunction is that it blocks a multitude of neurotransmitter receptors in the brain—dopaminergic, serotonergic, muscarinic, adrenergic, and histaminergic. All of those neuronal pathways in the brain are compromised. Meanwhile, MRI studies find that over a period of several years, the virus shrinks the cerebral cortex, and this shrinkage is tied to cognitive decline. A terrified public clamors for a cure.

Now such an illness has in fact hit millions of American children and adults. We have just described the effects of Eli Lilly’s best-selling antipsychotic, Zyprexa.

If psychoactive drugs are useless, as Kirsch believes about antidepressants, or worse than useless, as Whitaker believes, why are they so widely prescribed by psychiatrists and regarded by the public and the profession as something akin to wonder drugs? Why is the current against which Kirsch and Whitaker and, as we will see, Carlat are swimming so powerful? I discuss these questions in Part II of this review.

—This is the first part of a two-part article.

* See Claudia Dreifus, “Using Imaging to Look at Changes in the Brain,” The New York Times, September 15, 2008.